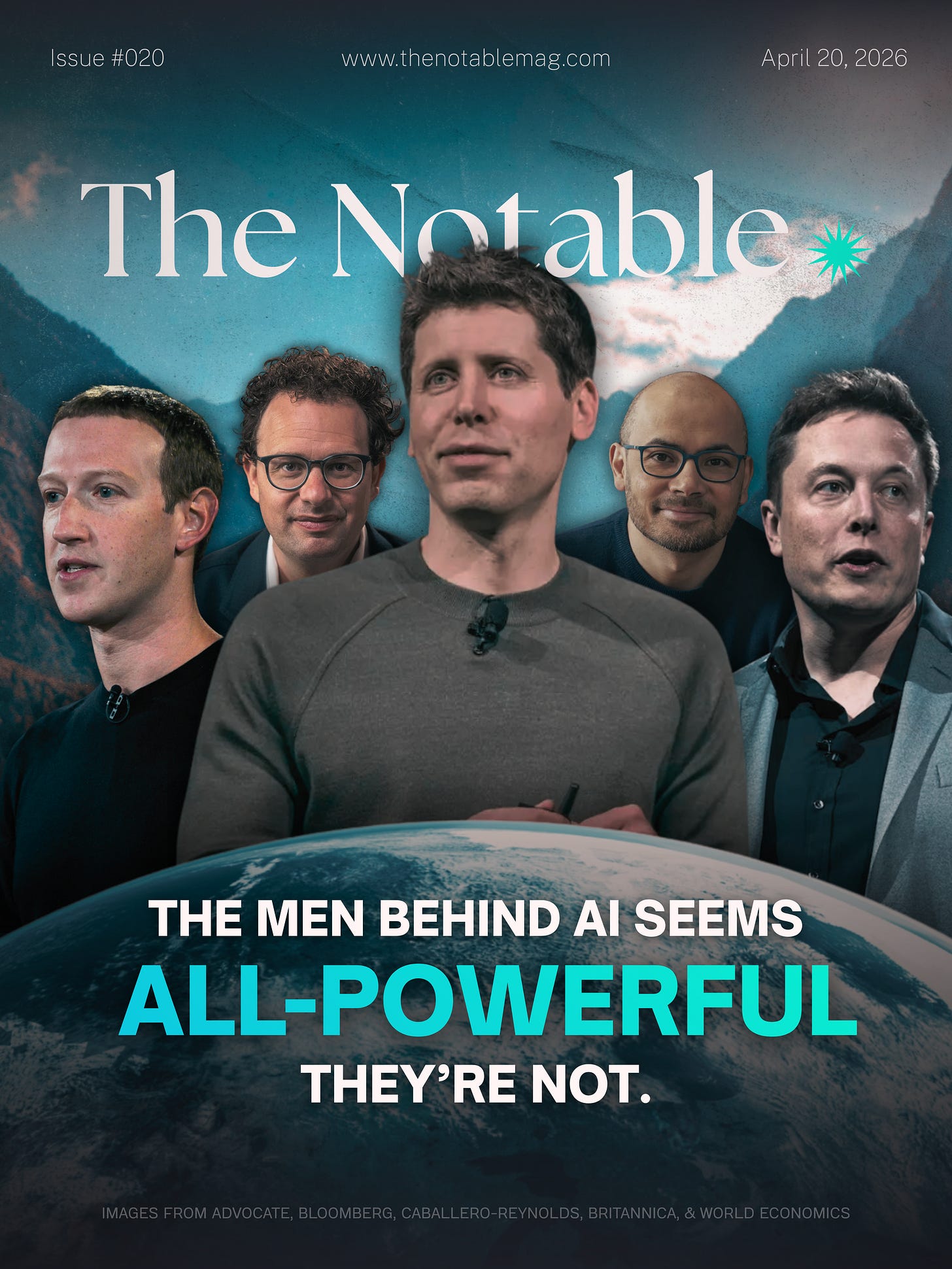

The men behind AI seem all-powerful. They’re not.

From OpenAI to Meta, influence is rising fast. But history shows real power comes from something deeper.

In the early years of the automobile, Henry Ford was not yet inevitable. The car existed before him. So did the idea of mechanized transport. What Ford built was something narrower and more consequential: a system that could scale, distribute, and embed the technology into everyday life.

Artificial intelligence now sits at a similar early stage.

A small group of executives has come to define the field’s direction: Sam Altman at OpenAI, Dario Amodei at Anthropic, Demis Hassabis at Google DeepMind, Elon Musk at xAI, and Mark Zuckerberg at Meta Platforms.

Their products reach hundreds of millions. Their decisions shape how governments, militaries, and corporations begin to use AI. Their visibility resembles that of earlier industrial titans.

But visibility is not power in the historical sense.

That distinction is beginning to matter.

The comparison to figures like John D. Rockefeller or Ford rests on a familiar pattern. New technologies often pass through a phase where a small number of individuals translate invention into dominance. Railways, oil, steel, and computing all followed this arc.

What elevated those figures was not invention alone. It was control over the system that made the technology indispensable.

Rockefeller did not just refine oil. He controlled refining, transport, and pricing through Standard Oil. Ford did not invent the car. He controlled production, wages, and distribution through Ford Motor Company.

Their power came from scale tied to structure.

AI has not reached that point.

At first glance, the numbers suggest momentum. OpenAI reports usage in the hundreds of millions. Meta Platforms is investing tens of billions of dollars in AI infrastructure. Microsoft has committed more than $10 billion to OpenAI. Amazon and Google are racing to expand cloud capacity to support AI workloads.

The system appears to be scaling.

But the underlying mechanics are fragmented.

Unlike oil or automobiles, AI does not yet rely on a single dominant industrial chain. It depends on a layered ecosystem. Semiconductor firms like Nvidia supply the computational backbone. Cloud providers operate the infrastructure. Model developers build systems. Enterprises integrate them into workflows.

No single actor controls the full stack.

Even the most visible AI leaders operate within constraints that earlier tycoons largely avoided.

Take control.

Ford owned his company outright. Rockefeller exercised near-total authority over Standard Oil. Their strategic decisions translated directly into system-wide outcomes.

By contrast, Sam Altman does not control OpenAI in that way. Its hybrid structure places ultimate authority with a non-profit board. That board briefly removed him in 2023, exposing how governance can override leadership even at the center of the AI boom.

Dario Amodei operates within a company backed heavily by external capital, including Amazon and Google. Demis Hassabis leads a division within a larger corporate hierarchy.

Even Elon Musk, whose influence spans multiple industries, derives much of his structural power from Tesla and SpaceX rather than AI alone.

The pattern is consistent.

The individuals shaping AI are visible. But they are not structurally dominant.

The constraint is not only governance. It is economics.

Historical industrial power scaled through labor and physical capital. Ford’s factories employed hundreds of thousands. At its peak, Ford Motor Company represented a measurable share of the American workforce.

AI does not scale in the same way.

Model development requires highly specialized talent and vast computing resources, but relatively few employees. The economic footprint is concentrated in capital expenditure rather than labor expansion.

That changes how power accumulates.

A company that employs fewer people, even if technologically influential, does not embed itself into society in the same way. It does not shape wages, urban development, or supply chains at comparable scale.

It influences decisions rather than structures.

For now, that distinction keeps AI leaders below the threshold reached by earlier industrial magnates.

Yet the trajectory is not static.

What AI lacks in structural integration, it makes up for in horizontal reach.

Earlier technologies transformed specific sectors. Railways reshaped transport. Oil powered industry. Electricity redefined manufacturing.

AI moves differently.

It inserts itself across sectors simultaneously. Finance, healthcare, defense, media, and education are all beginning to integrate AI systems into core processes. The technology does not replace a single industry. It modifies many at once.

That creates a different pathway to power.

Instead of dominating one sector deeply, AI firms may influence multiple sectors indirectly.

The system becomes less centralized and more distributed.

But that distribution introduces its own tension.

Influence without control creates instability.

AI companies rely heavily on upstream dependencies. Nvidia dominates advanced AI chips, with a share of the data center GPU market widely estimated at more than 80%. Cloud providers like Amazon Web Services, Microsoft Azure, and Google Cloud control access to computing infrastructure.

This shifts leverage away from model developers.

Even as OpenAI or Anthropic build more advanced systems, they remain dependent on external platforms to deploy and scale them.

The result is a fragmented power structure.

No single entity fully captures the value chain.

At the same time, governments are moving earlier than they did in previous technological waves.

The European Union’s AI Act introduces risk-based regulation for AI systems. The United States has begun imposing export controls on advanced semiconductors, directly affecting companies like Nvidia and limiting access to markets such as China.

This is not the laissez-faire environment that allowed Standard Oil or early railroads to consolidate power with minimal resistance.

Regulation is arriving before dominance is fully established.

That compresses the window in which AI leaders can translate influence into structural control.

There is also a deeper misalignment.

The narrative around AI assumes inevitability: that the companies leading today will naturally become the dominant institutions of tomorrow.

History suggests otherwise.

Technological waves often separate early visibility from eventual control. Many of the firms that popularized early internet services did not become the internet’s dominant platforms. The same pattern appeared in electricity and aviation.

The decisive phase comes later.

It arrives when the system stabilizes around infrastructure, standards, and distribution.

AI has not reached that phase.

For now, the leading figures in AI occupy an ambiguous position.

They shape expectations. They influence policy debates. They guide early adoption.

But they do not yet control the system that determines how AI embeds into the economy.

That system is still forming across chips, cloud infrastructure, enterprise integration, and regulation.

And within that formation lies the unresolved tension.

Power in AI is clearly accumulating.

But it is accumulating in the wrong places to resemble the past.

Which raises a quieter possibility.

The next Rockefeller or Ford in AI may not be the one building the models at all.